Before visiting the Jinesis Lab, I read about Causal AI Scientist!

Contents:

What does Causal AI Scientist do? (automate causal inference)

Causal AI Scientist as case study in symbolic-connectionist synergy

What does Causal AI Scientist do?

Inputs:

Causal AI Scientist takes three inputs:

Dataset (CSV)

e.g. MVP dataset for a personal productivity intervention:

person,intervention,productive_hours,hours_slept,pre_post_intervention

1,1,6.3,8.2,1

2,0,5.2,7.3,0

...

Treatment: intervention

Outcome: productive_hours

Covariate: hours_slept

Temporal: pre_post_intervention

Unit: person

Metadata, e.g.:

dataset_name: personal_productivity_experiment

description:

Logged productivity and sleep data for individuals before and after adopting a productivity intervention.

notes:

source: “Self-tracked app data”

collection_period: “March–June 2025”

Natural-language query

e.g. “Does this intervention improve productivity?”
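To make the three inputs concrete, here is a minimal sketch of how they could be bundled before being handed to a pipeline. The `CausalQuery` class and `load_query` helper are hypothetical names invented for illustration, not part of Causal AI Scientist; the CSV rows, metadata, and question are the ones listed above.

```python
import csv
import io
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class CausalQuery:
    """Hypothetical container for the three inputs: dataset, metadata, question."""
    rows: List[Dict[str, str]]   # parsed CSV rows, values kept as strings
    metadata: Dict[str, str]     # free-form dataset description
    question: str                # natural-language causal question


# The two example rows shown in the post.
CSV_TEXT = """person,intervention,productive_hours,hours_slept,pre_post_intervention
1,1,6.3,8.2,1
2,0,5.2,7.3,0
"""

METADATA = {
    "dataset_name": "personal_productivity_experiment",
    "source": "Self-tracked app data",
    "collection_period": "March–June 2025",
}


def load_query(csv_text: str, metadata: Dict[str, str], question: str) -> CausalQuery:
    """Parse the CSV text and bundle it with the metadata and the question."""
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    return CausalQuery(rows=rows, metadata=metadata, question=question)


query = load_query(CSV_TEXT, METADATA, "Does this intervention improve productivity?")
print(len(query.rows), "rows; columns:", list(query.rows[0].keys()))
```

Keeping the values as strings at this stage is a deliberate simplification: deciding which columns are numeric, binary, or temporal is exactly the kind of role inference the tool is described as doing itself.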

Output:

Causal AI Scientist chooses a causal inference method, runs a script implementing that method, and returns a natural-language response to the original query. This response contains a causal estimate, its statistical significance, and some notes on assumptions and limitations of the chosen method.
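As a rough illustration of what such a script might compute, here is a minimal difference-in-differences sketch for a dataset shaped like the one above. Whether Causal AI Scientist would actually pick this method for this dataset is an assumption, the rows are made-up numbers, and the sketch reports only a point estimate, with no significance test or assumption checks.

```python
from statistics import mean

# Made-up rows for illustration only:
# (intervention, pre_post_intervention, productive_hours)
ROWS = [
    (1, 0, 5.0), (1, 0, 5.2),   # treated, before
    (1, 1, 6.2), (1, 1, 6.4),   # treated, after
    (0, 0, 5.1), (0, 0, 4.9),   # control, before
    (0, 1, 5.3), (0, 1, 5.5),   # control, after
]


def cell_mean(rows, treated, post):
    """Mean outcome for one (treatment group, period) cell."""
    return mean(y for t, p, y in rows if t == treated and p == post)


def did_estimate(rows):
    """Change in the treated group minus change in the control group."""
    treated_change = cell_mean(rows, 1, 1) - cell_mean(rows, 1, 0)
    control_change = cell_mean(rows, 0, 1) - cell_mean(rows, 0, 0)
    return treated_change - control_change


estimate = did_estimate(ROWS)
print(f"DiD estimate: {estimate:.2f} productive hours")
```

The estimate is only causal if the two groups would have followed parallel trends without the intervention, which is the kind of caveat the tool's notes on assumptions and limitations are meant to surface.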

Causal AI Scientist as case study in symbolic-connectionist synergy

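The Bitter Lesson: Rich Sutton’s observation (and prediction) that general methods which scale with computation tend to overtake approaches built on hand-encoded human knowledge; read here as connectionist AI replacing symbolic AI across a wide range of domains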

Symbolic AI: Explicit knowledge representation and logical reasoning using human-readable symbols and rules

Connectionist AI: Deep learning / neural networks

Causal AI Scientist

┌────────────────────────────────────────────────────────┐

│ CONNECTIONIST LAYER │

│ (semantic understanding & natural language reasoning)│

└────────────────────────────────────────────────────────┘

│

▼

[1] input_parser → extract query type, entities

│

▼

[2] dataset_analyzer → infer variable roles via LLM heuristics

│

▼

[3] query_interpreter → formalize causal query (T, Y, X, Z, time)

────────────────────────────────────────────────────────────────────────

transition: “neural interpretation → symbolic reasoning”

────────────────────────────────────────────────────────────────────────

┌────────────────────────────────────────────────────────┐

│ SYMBOLIC CORE │

│ (deterministic causal logic and econometric methods) │

└────────────────────────────────────────────────────────┘

│

▼

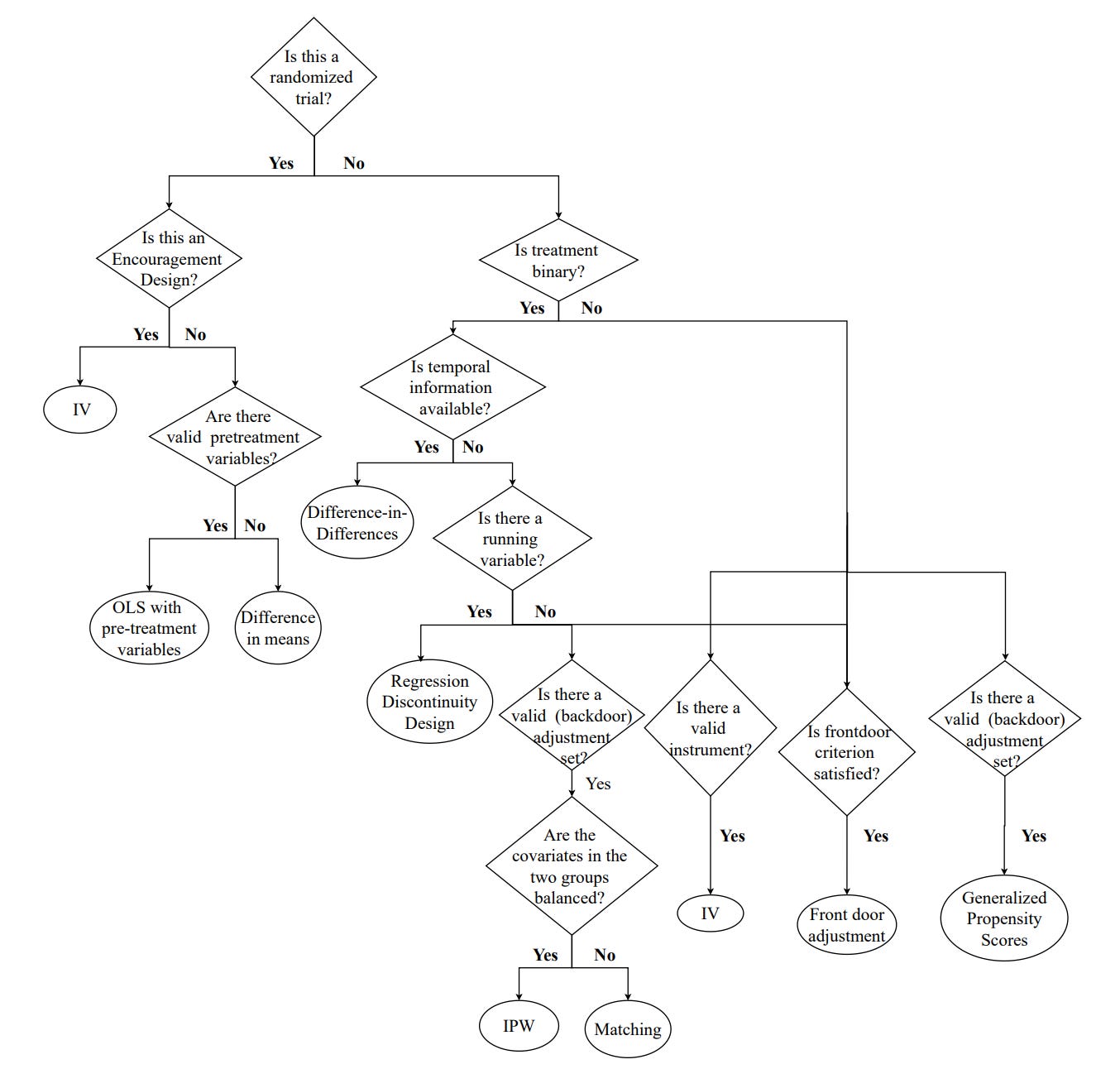

[4] method_selector → traverse fixed decision tree

│

▼

[5] method_validator → apply rule-based assumption checks

│

▼

[6] method_executor → run pre-verified statistical template

────────────────────────────────────────────────────────────────────────

transition: “symbolic reasoning → neural communication”

────────────────────────────────────────────────────────────────────────

┌────────────────────────────────────────────────────────┐

│ CONNECTIONIST OUTPUT LAYER │

│ (language generation & explanatory synthesis) │

└────────────────────────────────────────────────────────┘

│

▼

[7] explanation_generator → generate narrative interpretation

│

▼

[8] output_formatter → assemble structured + natural outputs
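The hand-off in the middle of the diagram, from a formalized query to a fixed decision tree, can be sketched roughly as follows. The `FormalQuery` fields and every branch of `select_method` are hypothetical and chosen for illustration; the tool's real decision tree is not reproduced here.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class FormalQuery:
    """Hypothetical output of the connectionist layer (step [3])."""
    treatment: str
    outcome: str
    covariates: Tuple[str, ...] = ()
    instrument: Optional[str] = None
    time_column: Optional[str] = None
    randomized: bool = False


def select_method(q: FormalQuery) -> str:
    """Toy fixed decision tree (step [4]); the branches are illustrative only."""
    if q.randomized:
        return "difference_in_means"
    if q.instrument is not None:
        return "instrumental_variables"
    if q.time_column is not None:
        return "difference_in_differences"
    if q.covariates:
        return "regression_adjustment"
    return "naive_comparison"


q = FormalQuery(
    treatment="intervention",
    outcome="productive_hours",
    covariates=("hours_slept",),
    time_column="pre_post_intervention",
)
print(select_method(q))
```

The point of the sketch is the division of labor: everything fuzzy (which column is the treatment, whether the data were randomized) is settled before `select_method` runs, so the selection step itself stays deterministic and auditable.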

‘Bitter Lesson’ Extension:

To what extent does the symbolic core outperform a connectionist core?

Delightfully, you could run Causal AI Scientist with the original symbolic core vs. an alternative connectionist core, use an LLM to score the outputs of each, and then use Causal AI Scientist itself to quantify the effect size of this intervention.
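As a back-of-the-envelope stand-in for that comparison, here is a plain paired-difference calculation rather than a run of Causal AI Scientist itself. The scores are placeholder numbers, not results, and the assumption is that an LLM judge has rated both cores' outputs on the same queries.

```python
from math import sqrt
from statistics import mean, stdev

# Placeholder judge scores (not real results), one pair per query.
symbolic_scores = [8, 7, 9, 6]
connectionist_scores = [6, 7, 7, 5]


def paired_effect(a, b):
    """Mean paired difference (a - b) and its standard error."""
    diffs = [x - y for x, y in zip(a, b)]
    mean_diff = mean(diffs)
    std_err = stdev(diffs) / sqrt(len(diffs))
    return mean_diff, std_err


mean_diff, std_err = paired_effect(symbolic_scores, connectionist_scores)
print(f"mean score gap: {mean_diff:.2f} (standard error {std_err:.2f})")
```

Pairing by query matters here: both cores see the same inputs, so differencing within each query removes variation in query difficulty from the comparison.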

You could then try getting the connectionist core to perform as well as the symbolic core. For example, you could train an LLM on dataset-method pairs without giving it access to the decision tree. How long would it take to re-derive the tree?

This decision tree can, after all, now be used to create a vast amount of ‘causal inference’ synthetic data. Which leads me to…

Automated causal inference for quantified self enthusiasts

I’m particularly interested in causal inference pertaining to ‘quantified self’. Currently, I track a mix of quantitative and qualitative data in a spreadsheet, but I’m also interested in passive tracking such as keystroke tracking, EEG, EMG, and EOG. I have DoneThat and RescueTime running.

If available at a reasonable price point, I’d buy wearables that track my every move. I’d like insights into which actions work really well for me. All kinds of latent variables (e.g. hydration) influence cognition and focus.

Now that causal inference is ~mostly automated, great opportunities await the first startup to do comprehensive passive data tracking and return the highest-leverage interventions to help you achieve your goals.

Causal AI Scientist as case study in benchmark response

Causal AI Scientist hit SOTA on:

CauSciBench, a real-world benchmark designed to evaluate the ability of LLMs to perform causal analysis on tabular datasets.

A benchmark operates like a ‘pull incentive’ / irresistible challenge for researchers to perform well on it. Or (more realistically) researchers look at problems they could address, find one with a suitable benchmark, and end up investing more time and energy into it. As previously discussed:

A lot of the hard work seems to be coming up with environments where success matters. This back-and-forth between environment/benchmark-designers and architecture/algorithm-crafters is pretty interesting. Each constantly striving to outdo the other. Co-evolution.

I’m excited about good uses of automated benchmark creation—helps you create pull incentives, and then AI can automate the process of hill-climbing on these benchmarks. This seems like a blueprint for MVP open-endedness—spinning up well-specified benchmarks at different levels of granularity, then setting AI Villages to improve on these with amateur human input.

Who works on this?
